Case Study

+940% Schema Integrity in 24 Hours

How Avante Visibility took SellerMockups.com from being invisible to AI platforms to being fully discoverable by deploying 6 schema types across 159 pages in a single day.

Before: 5 / 100 Schema Integrity

After: 52 / 100 Schema Integrity

Baseline: Avante Visibility GEO Audit v3.0 (April 1, 2026). Post-implementation: Claude GEO Audit (April 2, 2026).

The Challenge

SellerMockups.com is an AI-powered mockup generation platform with 159 pages, 79 blog posts, and thousands of product listings. Despite strong content, the site had zero machine-readable structured data — meaning AI platforms like ChatGPT, Perplexity, and Google Gemini could not understand its purpose, products, or credibility.

The result: 0% citation rate on commercial-intent queries like "best AI mockup generator for Etsy sellers" — the exact queries where new customers search. Competitors Dynamic Mockups and Mockey dominated at 70% citation rates each.

What We Implemented

Schema Markup (6 Types)

Deployed structured data across all 159 pages. Schema types include: WebSite, Organization, SoftwareApplication, FAQPage, BreadcrumbList, and VideoObject. This allows AI platforms to understand the site's purpose, products, pricing, and FAQ content in machine-readable format.
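As a rough illustration of what three of these schema types can look like, here is a minimal Python sketch that builds JSON-LD nodes and wraps them in script tags. The site URL, the `applicationCategory` value, and the helper names are assumptions made for this example; the markup actually deployed on SellerMockups.com is not reproduced in this case study.

```python
import json

# Assumed canonical URL, for illustration only.
SITE_URL = "https://sellermockups.com"


def build_site_schema(site_url: str) -> list:
    """Return WebSite, Organization, and SoftwareApplication JSON-LD nodes."""
    website = {
        "@context": "https://schema.org",
        "@type": "WebSite",
        "name": "SellerMockups",
        "url": site_url,
    }
    organization = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": "SellerMockups",
        "url": site_url,
    }
    app = {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": "SellerMockups",
        # Hypothetical category and platform values.
        "applicationCategory": "DesignApplication",
        "operatingSystem": "Web",
        "url": site_url,
    }
    return [website, organization, app]


def to_script_tag(node: dict) -> str:
    """Serialize one JSON-LD node into a script tag for the page head."""
    return (
        '<script type="application/ld+json">'
        + json.dumps(node, indent=2)
        + "</script>"
    )


for node in build_site_schema(SITE_URL):
    print(to_script_tag(node))
```

Generating the markup from one function per page template is one way to keep 159 pages consistent, though any templating approach would work.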

llms.txt + llms-full.txt

Created llms.txt (4KB) providing AI models a concise overview of the site's purpose and structure. Created llms-full.txt (22KB) with comprehensive product details, competitor comparisons, garment databases, blog index, and technical specifications. Both follow the emerging standard for AI discoverability.
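For readers unfamiliar with the format, the sketch below generates a small llms.txt-style file in Python, assuming the commonly proposed layout: an H1 title, a blockquote summary, then H2 sections of markdown links. The section names, URLs, and notes are hypothetical placeholders, not the contents of the real SellerMockups files.

```python
def build_llms_txt(title: str, summary: str, sections: dict) -> str:
    """Render an llms.txt-style markdown document.

    sections maps a heading to a list of (name, url, note) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    return "\n".join(lines)


# Hypothetical content, for illustration only.
llms_txt = build_llms_txt(
    title="SellerMockups",
    summary="AI-powered mockup generation platform.",
    sections={
        "Product": [
            ("Overview", "https://sellermockups.com/", "What the tool does"),
        ],
        "Blog": [
            ("Blog index", "https://sellermockups.com/blog", "All posts"),
        ],
    },
)
print(llms_txt)
```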

ai-plugin.json

Deployed an AI plugin manifest file with schema_version, name_for_model, and description_for_model fields. This file helps AI assistants like ChatGPT understand the tool's capabilities and recommend it to users asking about mockup generation.
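The three fields named above can be sketched as follows. This is a minimal example, not the deployed manifest: the field values are hypothetical, the whitespace check on `name_for_model` is a conservative assumption, and a production manifest would normally carry additional fields that this case study does not list.

```python
import json


def build_plugin_manifest(name_for_model: str, description_for_model: str) -> dict:
    """Build a manifest containing only the three fields named in the text."""
    # Assumption: the model-facing name should not contain whitespace.
    if any(ch.isspace() for ch in name_for_model):
        raise ValueError("name_for_model must not contain whitespace")
    return {
        "schema_version": "v1",  # hypothetical version string
        "name_for_model": name_for_model,
        "description_for_model": description_for_model,
    }


# Hypothetical values, for illustration only.
manifest = build_plugin_manifest(
    "sellermockups",
    "Generates product mockups for online sellers.",
)
print(json.dumps(manifest, indent=2))
```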

AI Crawler Access Configuration

Configured robots.txt to explicitly allow all 12+ AI crawlers including GPTBot, ClaudeBot, Claude-SearchBot, PerplexityBot, OAI-SearchBot, Google-Extended, Bytespider, Cohere-AI, and Applebot-Extended.
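A configuration like this can be generated and checked with Python's standard `urllib.robotparser`. The sketch below covers only the nine crawlers named above and assumes a plain `Allow: /` rule per crawler plus the URL shown; the real robots.txt may contain additional rules.

```python
from urllib.robotparser import RobotFileParser

# Crawler names as listed in the case study.
AI_CRAWLERS = [
    "GPTBot", "ClaudeBot", "Claude-SearchBot", "PerplexityBot",
    "OAI-SearchBot", "Google-Extended", "Bytespider", "Cohere-AI",
    "Applebot-Extended",
]


def build_robots_txt(crawlers: list) -> str:
    """Emit one explicit allow-all group per crawler."""
    groups = [f"User-agent: {name}\nAllow: /" for name in crawlers]
    return "\n\n".join(groups) + "\n"


robots_txt = build_robots_txt(AI_CRAWLERS)

# Parse the generated file and confirm each crawler may fetch the homepage.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
allowed = {
    name: parser.can_fetch(name, "https://sellermockups.com/")  # assumed URL
    for name in AI_CRAWLERS
}
print(robots_txt)
print(allowed)
```

Listing each crawler explicitly is redundant when nothing is disallowed, but it documents intent and survives a later site-wide `Disallow` rule under `User-agent: *`.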

FAQ Content Architecture

Structured 110 FAQ sections with 970 question headings across the site in a format optimized for AI extraction. Added FAQPage schema markup to make this content machine-readable.
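To show how question headings map onto FAQPage markup, here is a minimal Python sketch that converts question and answer pairs into a schema.org `FAQPage` node. The sample question and answer are hypothetical; the site's actual FAQ text is not reproduced here.

```python
import json


def build_faq_schema(qa_pairs: list) -> dict:
    """Turn (question, answer) pairs into a schema.org FAQPage JSON-LD node."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Hypothetical FAQ entry, for illustration only.
faq = build_faq_schema([
    ("What is SellerMockups?", "An AI-powered mockup generation platform."),
])
print(json.dumps(faq, indent=2))
```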

Contact Page & E-E-A-T Signals

Created a dedicated contact page to strengthen E-E-A-T trust signals. It gives users and AI evaluation systems a way to verify that the business is reachable.

Before & After Scorecard

| Metric | Before | After | Change |
|---|---|---|---|
| Schema Integrity Score | 5 / 100 | 52 / 100 | +47 pts (+940%) |
| Pages with Structured Data | 0 / 159 | 159 / 159 | +159 pages |
| Schema Types Deployed | 0 | 6 | +6 types |
| llms.txt Score | Not detected | 92 / 100 | +92 pts (NEW) |
| llms-full.txt | Not detected | 22KB deployed | NEW |
| ai-plugin.json | Not detected | Live | NEW |
| AI Crawler Access | 98 / 100 | 98 / 100 | Maintained |
| Indexability | 97.5 / 100 | 97.5 / 100 | Maintained |
| Snippet Controls | 100 / 100 | 100 / 100 | Perfect |
| FAQ Sections Active | 110 (no markup) | 110 (with schema) | Activated |
| Question Headings | 970 (no markup) | 970 (structured) | Activated |
| Contact Page | Missing | Live | Added |

Key Takeaway

Schema Integrity saw the single largest improvement: from 5/100 to 52/100 (+940%). The site went from zero machine-readable structured data to 6 active schema types across all 159 pages. Combined with the new llms.txt (92/100) and ai-plugin.json, SellerMockups now has a complete AI discovery infrastructure that did not exist before this engagement.

AI Citation Rate Analysis

| Query Category | Queries | Cited | Rate | Status |
|---|---|---|---|---|
| Branded | 5 | 4 | 80% | WINNING |
| Comparison | 5 | 3 | 60% | STRONG |
| Commercial Intent | 10 | 0 | 0% | GAP |
| Informational | 8 | 0 | 0% | GAP |

SellerMockups is cited on 80% of branded queries and 60% of comparison queries — confirming AI platforms recognize the brand. The gap is in commercial-intent and informational queries, where competitors Dynamic Mockups and Mockey dominate.

Competitive Citation Comparison

| Site | Branded | Commercial | Info | Compare | Overall |
|---|---|---|---|---|---|
| SellerMockups | 80% | 0% | 0% | 60% | 25% |
| Dynamic Mockups | 20% | 70% | 25% | 80% | 50% |
| Mockey | 20% | 70% | 25% | 20% | 39.3% |
| Placeit | 0% | 0% | 0% | 0% | 0% |
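The Overall column is consistent with weighting each category by its query count (5 branded, 10 commercial, 8 informational, 5 comparison, 28 in total). The Python sketch below reproduces the figures under that assumption, and further assumes every site was scored on the same 28 queries.

```python
# Queries per category, from the AI Citation Rate Analysis table.
QUERY_COUNTS = {"branded": 5, "commercial": 10, "informational": 8, "comparison": 5}

# Per-category citation rates in percent, from the comparison table.
RATES = {
    "SellerMockups": {"branded": 80, "commercial": 0, "informational": 0, "comparison": 60},
    "Dynamic Mockups": {"branded": 20, "commercial": 70, "informational": 25, "comparison": 80},
    "Mockey": {"branded": 20, "commercial": 70, "informational": 25, "comparison": 20},
    "Placeit": {"branded": 0, "commercial": 0, "informational": 0, "comparison": 0},
}


def overall_rate(rates: dict) -> float:
    """Query-weighted overall citation rate, in percent."""
    # Recover the cited count per category, then divide by total queries.
    cited = sum(round(rates[cat] * n / 100) for cat, n in QUERY_COUNTS.items())
    return 100 * cited / sum(QUERY_COUNTS.values())


for site, rates in RATES.items():
    print(f"{site}: {overall_rate(rates):.1f}%")
```

A simple average of the four category rates would give different numbers (35% for SellerMockups, for example), which is why the weighting matters when comparing sites.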

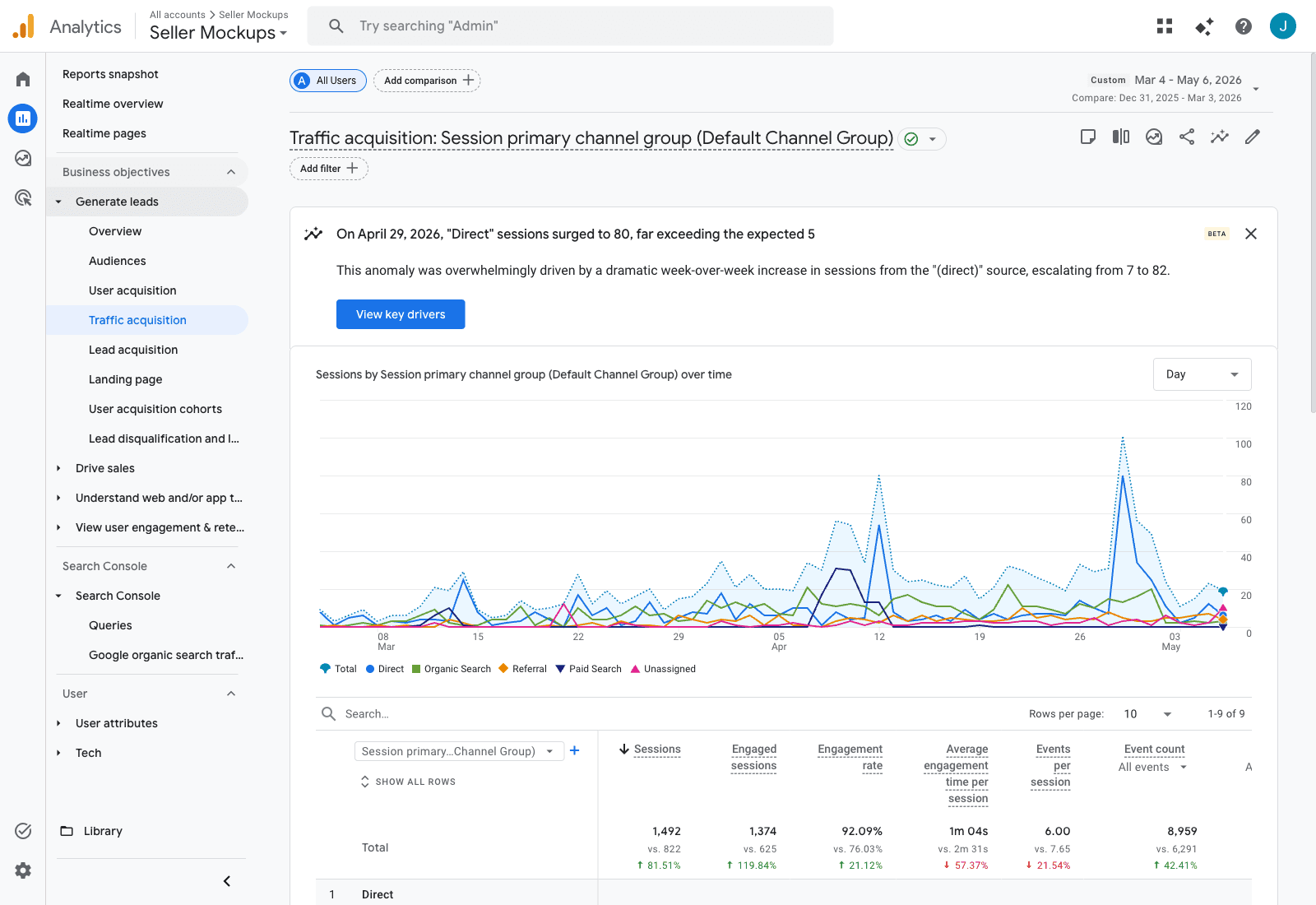

GA4 Receipts · Launch → Now

The Data Tells the Story

The 24-hour citation snapshot above was captured the day the implementation went live. To measure real downstream impact, we pulled GA4 data for the entire post-implementation period: Mar 4 – May 6, 2026 (64 days, from launch through today) versus the equivalent Dec 31, 2025 – Mar 3, 2026 pre-audit window (63 days). Same GA4 property, apples-to-apples, no marketing spend changes. Every chart below is a direct GA4 screenshot.

GA4's own anomaly detection flagged April 29, 2026 (top of report): “Direct sessions surged to 80, far exceeding the expected 5”, one of multiple post-audit anomalies. Over the full period, total sessions rose 81.51%, engaged sessions rose 119.84%, and the engagement rate climbed from 76.03% to 92.09%.

- 224 AI-platform sessions (Mar 4 → May 6)

- +984.78% organic search (46 → 499 sessions)

- +81.51% total sessions (822 → 1,492)

- +119.84% engaged sessions (625 → 1,374)

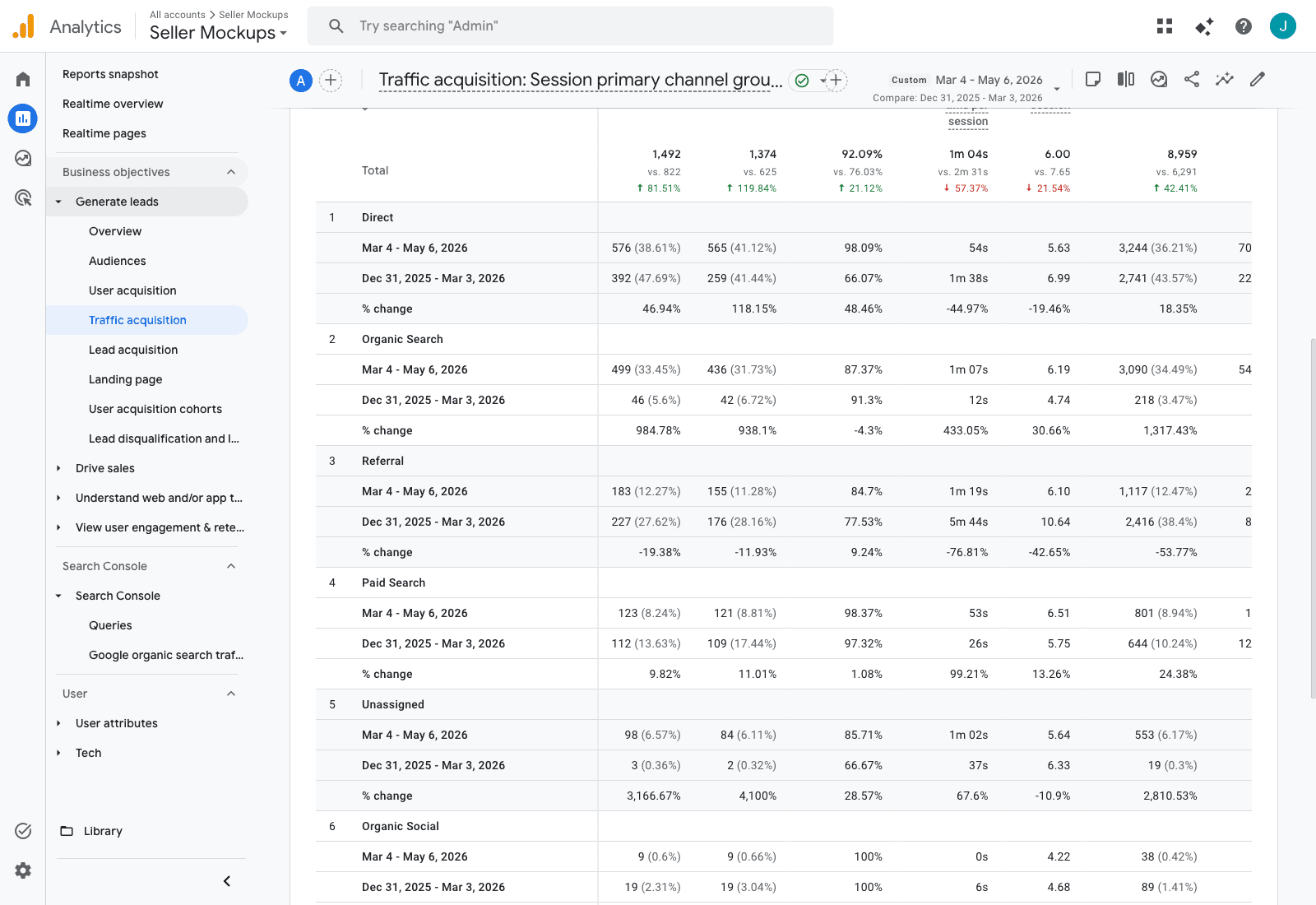

Channel Breakdown — Where the Lift Came From

GA4 Traffic Acquisition report, default channel grouping. Each channel shows post-audit (top row) vs pre-audit (second row) and % change.

| Metric | Pre-Audit (63d) | Post-Audit (64d) | Change |
|---|---|---|---|
| Total Sessions | 822 | 1,492 | +81.51% |
| Engaged Sessions | 625 | 1,374 | +119.84% |
| Engagement Rate | 76.03% | 92.09% | +21.12% relative (+16.06 pts) |
| Direct Sessions | 392 | 576 | +46.94% |
| Organic Search Sessions | 46 | 499 | +984.78% |
| Bing organic | see referral | 284 | second-largest source |
| Yahoo organic | 1 | 60 | +5,900% |
| DuckDuckGo organic | 0 | 36 | NEW |
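The percentages in this table can be re-derived from the raw session counts. The short Python sketch below does so, assuming engagement rate is engaged sessions divided by total sessions. It also separates the relative change in engagement rate from the change in percentage points, since the two are easy to confuse.

```python
def pct_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent."""
    return (after - before) / before * 100


# Session counts from the GA4 channel table.
pre = {"sessions": 822, "engaged": 625, "direct": 392, "organic": 46}
post = {"sessions": 1492, "engaged": 1374, "direct": 576, "organic": 499}

# Engagement rate = engaged sessions / total sessions, in percent.
pre_rate = pre["engaged"] / pre["sessions"] * 100
post_rate = post["engaged"] / post["sessions"] * 100

rate_relative = pct_change(pre_rate, post_rate)  # relative lift in the rate
rate_points = post_rate - pre_rate               # lift in percentage points

for key in ("sessions", "engaged", "direct", "organic"):
    print(f"{key}: {pct_change(pre[key], post[key]):+.2f}%")
print(f"engagement rate: {pre_rate:.2f}% -> {post_rate:.2f}%")
print(f"relative: {rate_relative:+.2f}%, points: {rate_points:+.2f}")
```

Note that the two windows differ by one day (63 vs 64 days), so the comparison is close to, but not exactly, like-for-like.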

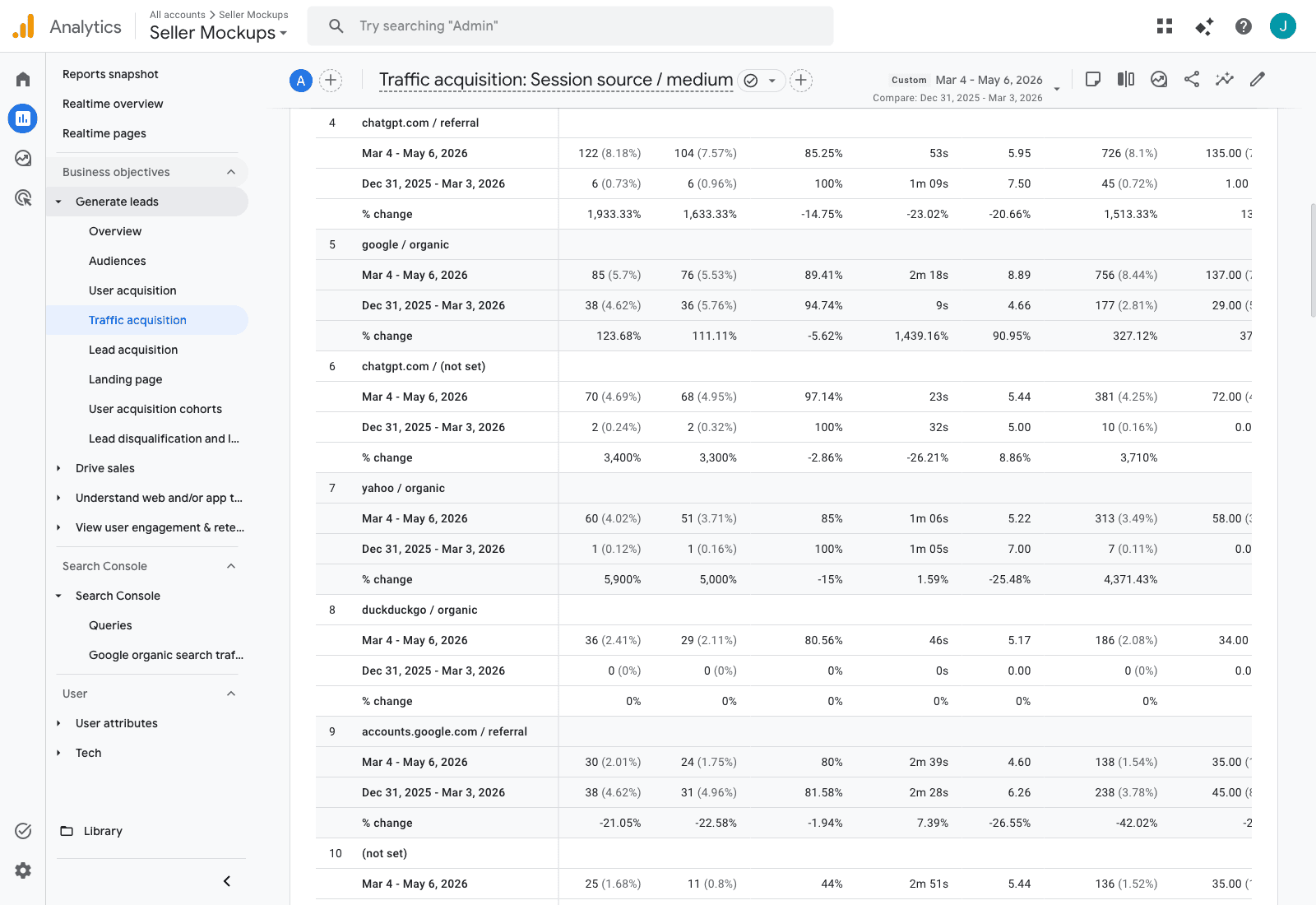

AI Platform Referrals — ChatGPT, Claude, Copilot, Perplexity

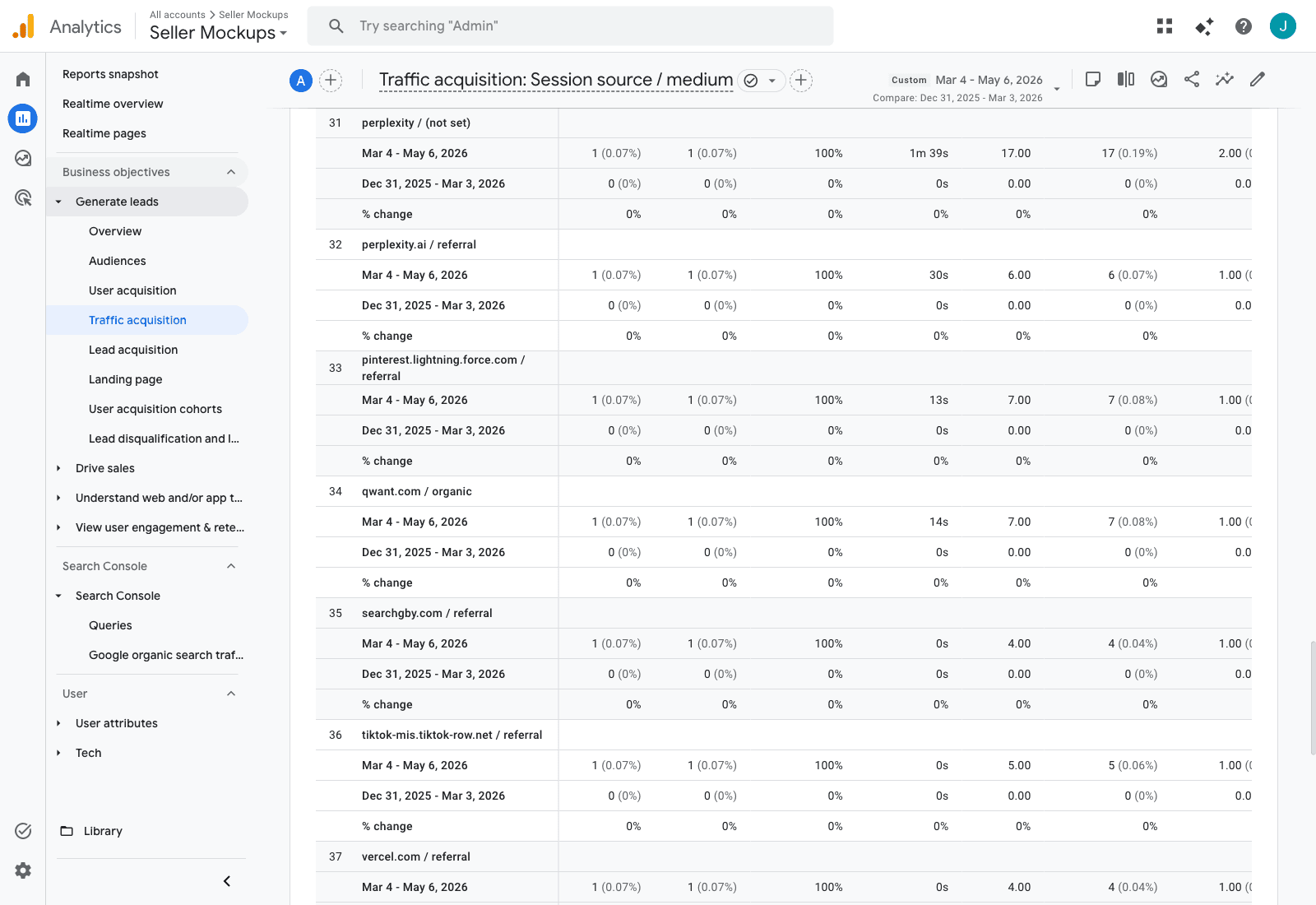

Same GA4 report, with the dimension switched to Session source / medium. Three groups of rows from the report are called out below, followed by a summary table.

ChatGPT — rows 4 & 6 of GA4 source/medium report

chatgpt.com / referral +1,933.33% · chatgpt.com / (not set) +3,400%

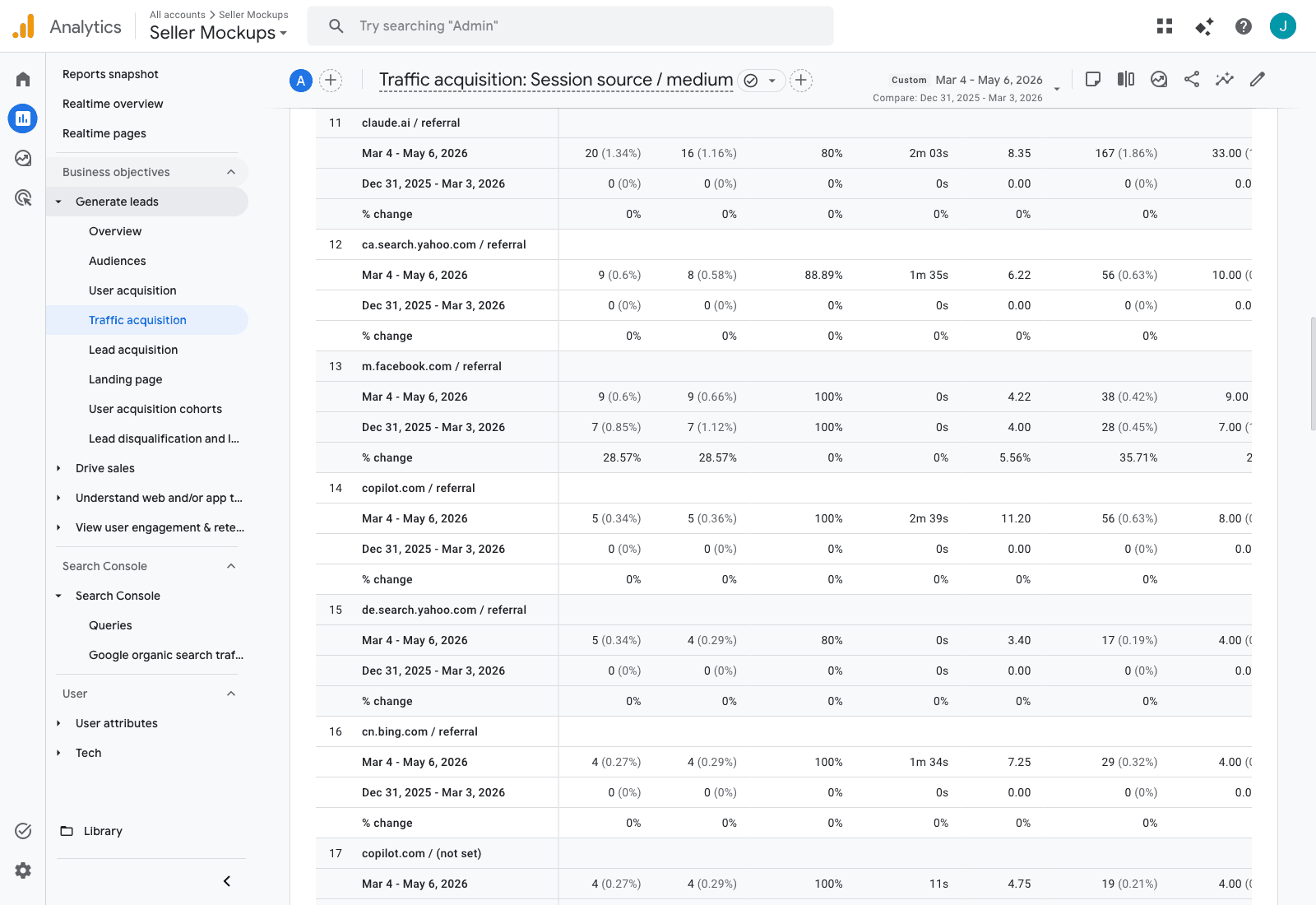

Claude.ai + Microsoft Copilot — rows 11, 14, 17 (all NEW sources, did not exist pre-audit)

claude.ai / referral: 20 sessions, 8.35 events/session, 2m 03s engagement. Microsoft Copilot was absent from the launch case study and now drives recurring referrals at 100% engagement.

Perplexity — rows 31 & 32 (first-ever Perplexity citations)

Two distinct Perplexity sources, both new since the audit.

AI Source Summary — All Variants, Cumulative

| Source / Medium | Sessions | Key Events | Engagement Quality |
|---|---|---|---|
| chatgpt.com / referral | 122 | 135 | 5.95 events/session, 85% engagement |
| chatgpt.com / (not set) | 70 | 70 | 5.44 events/session, 97% engagement |
| claude.ai / referral | 20 | 33 | 8.35 events/session, 2m 03s engagement, 80% |
| copilot.com / referral | 5 | 8 | 11.20 events/session, 100% engagement · NEW |
| copilot.com / (not set) | 4 | 4 | 4.75 events/session, 100% engagement · NEW |
| perplexity.ai / referral | 1 | 1 | First-ever Perplexity citation · NEW |
| perplexity / (not set) | 1 | 2 | 100% engagement · NEW |
| Combined direct AI-platform sessions | 224 | 254 | ChatGPT 193 + Claude 20 + Copilot 9 + Perplexity 2 |

Why This Matters

Sixty-four days of data, no marketing spend changes, apples-to-apples vs the equivalent pre-audit window. 224 direct sessions from ChatGPT, Claude, Microsoft Copilot, and Perplexity generated 254 key events. ChatGPT alone delivered 193 sessions and 206 key events. Claude.ai visitors averaged 8.35 events per session and 2m 03s of engagement, well above ChatGPT's 5.44 to 5.95 events per session. Microsoft Copilot, brand-new since the launch case study, recorded a 100% engagement rate, and its referral visits averaged 11.20 events per session, the highest of any AI source.

Data source: Google Analytics 4 property 518992634 (SellerMockups). Date ranges: Mar 4 – May 6, 2026 (post-audit, 64 days) vs Dec 31, 2025 – Mar 3, 2026 (pre-audit, 63 days). Screenshots captured 2026-05-06 directly from GA4 web UI.

What's Next: Phase 2

The on-site technical foundation is now complete. Phase 2 focuses on the off-site brand authority signals that AI models require before they will cite SellerMockups on competitive, commercial-intent queries — the queries where new customers search.

Projected Impact of Phase 2

GEO Score projected to increase from 65.9 to 78+ (C+ to B+). Commercial-intent citation rate projected to move from 0% to 30–40%, unlocking an estimated 200–500 incremental AI-referred visits per month and $225–$750/month in new revenue from AI search traffic alone.

Brand Authority

40/100

- LinkedIn company page

- G2, Trustpilot, Capterra profiles

- 10+ genuine customer reviews

E-E-A-T Signals

42/100

- Founder bio with credentials

- Author bylines on 79 pages

- External source links in blog posts

Trust Signals

45/100

- Customer case studies

- AggregateRating schema

- Trust badges on conversion pages

Community Presence

0/100

- Reddit engagement in niche subs

- Blogger outreach for roundups

- YouTube tutorial content

Want Results Like These for Your Business?

Every audit comes with a clear, prioritized roadmap — not a 60-page PDF. Find out exactly where your business stands in AI search and what to fix first.

Questions? Email jolyn@avante.agency or call (702) 525-5958.